The proliferation of artificial intelligence (AI) at the edge is revolutionizing industries by enabling real-time decision-making, reducing latency, and enhancing data privacy. However, this shift also creates an urgent need for efficient power management.

As edge devices often operate under stringent energy constraints and are deployed in remote or mobile environments, balancing performance with energy efficiency becomes a critical design challenge.

This article explores the essential principles of power management for AI at the edge, the design challenges, strategies for optimizing power in data centers, and the evolving trends shaping the future of edge intelligence.

1. What is Power Management?

1.1 Definition and Scope

Power management refers to the strategies, techniques, and technologies used to regulate and optimize energy consumption in electronic systems.

It encompasses both hardware and software solutions aimed at minimizing power usage while maintaining optimal performance.

In the context of edge AI, power management is vital for ensuring longevity, reliability, and operational efficiency of devices that often have limited energy resources.

1.2 Power Management Techniques

Modern systems employ a variety of power management methods:

- Dynamic Voltage and Frequency Scaling (DVFS): Adjusts the voltage and frequency based on computational load to save energy.

- Power Gating: Shuts off power to idle circuits, reducing static power consumption.

- Sleep/Idle States: Devices enter low-power states during inactivity.

- Energy Harvesting: Uses ambient energy sources like solar, vibration, or RF to power devices.
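The DVFS idea above can be made concrete with the standard first-order model for dynamic CMOS power, P ≈ C · V² · f. The sketch below is illustrative only: the operating points, the capacitance value, and the function names are hypothetical, not figures or APIs for any real chip, and static (leakage) power is ignored.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OperatingPoint:
    freq_hz: float  # clock frequency
    volts: float    # supply voltage assumed necessary at that frequency


# Hypothetical operating points, sorted from slowest to fastest.
OPERATING_POINTS = [
    OperatingPoint(200e6, 0.7),
    OperatingPoint(400e6, 0.8),
    OperatingPoint(800e6, 1.0),
]

SWITCHED_CAPACITANCE_F = 1e-9  # assumed effective switched capacitance


def dynamic_power_w(op: OperatingPoint) -> float:
    """First-order dynamic power: P = C * V^2 * f (leakage ignored)."""
    return SWITCHED_CAPACITANCE_F * op.volts ** 2 * op.freq_hz


def select_operating_point(required_cycles_per_s: float) -> OperatingPoint:
    """Pick the slowest point whose frequency still covers the load."""
    for op in OPERATING_POINTS:
        if op.freq_hz >= required_cycles_per_s:
            return op
    return OPERATING_POINTS[-1]  # saturate at the fastest point
```

With these made-up numbers, a load needing 150 million cycles per second maps to the 200 MHz point at roughly 98 mW, versus 800 mW at the fastest point. Because voltage enters squared, lowering voltage together with frequency saves more than lowering frequency alone.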

1.3 Importance Across Devices

Power management is not confined to edge devices. It extends to mobile phones, embedded systems, and large-scale data centers. Each use case demands a tailored approach:

- Mobile/IoT Devices: Require ultra-low power modes.

- Servers/Data Centers: Focus on thermal efficiency and energy distribution.

- Edge AI Systems: Need adaptive power strategies that balance computation and energy draw.

2. Importance of AI at the Edge

2.1 Understanding Edge AI

Edge AI refers to the deployment of artificial intelligence models directly on devices that are located physically close to the data source. Unlike traditional cloud-based AI systems, edge AI processes data locally, reducing the need to send information to distant servers.

2.2 Advantages of Edge AI

- Reduced Latency: Enables real-time responses without network delays.

- Enhanced Privacy: Keeps sensitive data on the device.

- Bandwidth Optimization: Limits the volume of data transmitted to the cloud.

- Offline Operation: Functions independently of internet connectivity.

2.3 Applications Driving Growth

- Smart Cities: Real-time traffic management, surveillance, waste control.

- Autonomous Vehicles: Immediate processing for navigation and obstacle avoidance.

- Industrial IoT: Predictive maintenance, real-time quality control.

- Healthcare Monitoring: Wearable devices for patient monitoring.

- Smart Agriculture: Crop health monitoring and precision farming.

3. Design Challenges for Power Management for AI at the Edge

3.1 Hardware Constraints

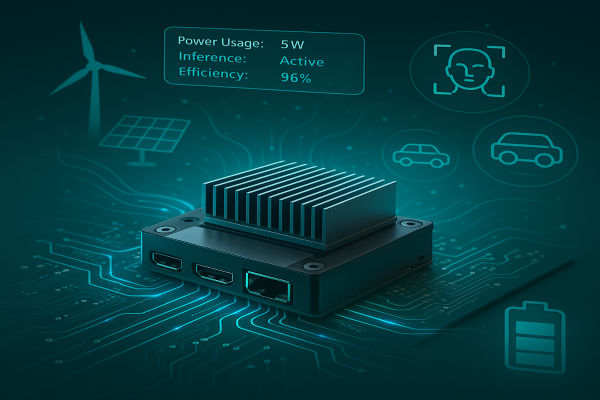

Edge devices often have small form factors and are powered by batteries, making thermal dissipation and energy consumption major concerns. High-performance AI tasks like deep learning inference are power-intensive, creating a mismatch between processing needs and available energy.
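The gap between processing needs and available energy can be estimated with simple duty-cycle arithmetic. The following is a rough sketch under stated assumptions: the function names are hypothetical, and the model ignores regulator losses, battery aging, and wake-up overhead.

```python
def average_power_mw(active_mw: float, sleep_mw: float, duty_cycle: float) -> float:
    """Average draw when the device is active for `duty_cycle` of the time."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return duty_cycle * active_mw + (1.0 - duty_cycle) * sleep_mw


def battery_life_hours(capacity_mwh: float, avg_power_mw: float) -> float:
    """Idealized runtime: usable capacity divided by average power."""
    if avg_power_mw <= 0:
        raise ValueError("avg_power_mw must be positive")
    return capacity_mwh / avg_power_mw
```

As an example with assumed values, a 7400 mWh battery (3.7 V, 2000 mAh) running inference continuously at 2 W lasts about 3.7 hours. Running inference 10% of the time and sleeping at 5 mW otherwise brings the average to 204.5 mW and the runtime to about 36 hours.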

3.2 Real-Time Processing Needs

AI at the edge must deliver timely insights, which requires consistently low and predictable latency. Maintaining real-time capabilities under limited power budgets necessitates highly optimized hardware and software.
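One way to frame this trade-off is to choose, among the power modes a device offers, the one that meets the latency deadline at the lowest energy per inference. The sketch below assumes three hypothetical modes with made-up latency and power figures. It also ignores idle power between inferences, which can change the answer on real hardware.

```python
from typing import NamedTuple, Optional, Sequence


class PowerMode(NamedTuple):
    name: str
    latency_ms: float  # time to complete one inference in this mode
    power_w: float     # average power while the inference runs


# Hypothetical modes; real values would come from measurement.
MODES = [
    PowerMode("low", 80.0, 2.0),
    PowerMode("balanced", 40.0, 5.0),
    PowerMode("max", 25.0, 10.0),
]


def energy_per_inference_mj(mode: PowerMode) -> float:
    """Energy in millijoules: milliseconds times watts."""
    return mode.latency_ms * mode.power_w


def cheapest_mode_meeting(deadline_ms: float,
                          modes: Sequence[PowerMode] = MODES) -> Optional[PowerMode]:
    """Return the lowest-energy mode that meets the deadline, or None."""
    feasible = [m for m in modes if m.latency_ms <= deadline_ms]
    if not feasible:
        return None
    return min(feasible, key=energy_per_inference_mj)
```

With these numbers, a 100 ms deadline selects the "low" mode (160 mJ per inference), while a 30 ms deadline forces the "max" mode (250 mJ).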

3.3 Integration with Energy-Efficient Architectures

Modern edge AI devices leverage heterogeneous computing platforms combining CPUs, GPUs, DSPs, and dedicated AI accelerators:

- NVIDIA Jetson: Combines GPU acceleration with power efficiency.

- Google Coral: Edge TPU offers high-speed inference at low power.

- Intel Movidius: Enables computer vision with low power draw.

3.4 Environmental and Operational Conditions

Edge devices are frequently deployed in remote or harsh environments. They must withstand temperature fluctuations, physical stress, and unstable power supplies. Power management strategies must adapt to these variables to ensure continuous operation.

4. Low Power Consumption with the Right Power Management for Data Centers

4.1 Data Centers and the AI Boom

AI training and inference tasks consume significant computational resources, increasing power consumption in data centers.

The rise of edge computing doesn’t negate the importance of data centers; instead, it emphasizes the need for efficient collaboration between centralized and distributed systems.

4.2 Strategies for Power Optimization in Data Centers

- Server Virtualization: Increases resource utilization and reduces energy waste.

- AI Accelerators: Purpose-built ASICs and reconfigurable FPGAs can be more power-efficient than general-purpose CPUs for AI workloads.

- Smart Cooling Systems: Use AI and sensors to optimize thermal conditions.

- Renewable Energy Integration: Solar, wind, and hydroelectric power reduce carbon footprints.

4.3 The Role of Edge Data Centers

Edge data centers act as intermediary hubs, reducing the load on core data centers. They handle localized data processing, minimizing long-distance transmission. These centers are smaller, more agile, and designed for high energy efficiency.

5. Trends Shaping Power Management for AI at the Edge

5.1 AI-Assisted Power Management

Machine learning can also be applied to power management itself. Predictive models learn usage patterns and adjust power settings, such as clock frequency or sleep timing, ahead of demand, saving energy with little or no loss of performance.
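A minimal sketch of the predictive idea is shown below. It uses an exponentially weighted moving average as a simple stand-in for a learned model; the class name, state names, and thresholds are all hypothetical.

```python
class LoadPredictor:
    """Tracks recent utilization (0.0 to 1.0) with an exponential moving average."""

    def __init__(self, alpha: float = 0.5, initial: float = 0.0) -> None:
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha
        self.estimate = initial

    def update(self, observed_utilization: float) -> float:
        """Blend the new observation into the running estimate."""
        self.estimate = (self.alpha * observed_utilization
                         + (1.0 - self.alpha) * self.estimate)
        return self.estimate


def choose_power_state(predicted_utilization: float) -> str:
    """Map predicted load to a power state; thresholds are illustrative."""
    if predicted_utilization < 0.2:
        return "sleep"
    if predicted_utilization < 0.6:
        return "low"
    return "high"
```

A production system would likely replace the moving average with a model trained on real usage traces, but the control loop (observe, predict, select a state) keeps the same shape.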

5.2 Specialized Low-Power AI Hardware

A new generation of AI chips is designed with power efficiency as a core feature:

- Arm Cortex-M55: A microcontroller-class CPU core with vector extensions (Helium) for machine learning and signal processing.

- Apple Neural Engine: Executes on-device machine learning efficiently.

- Hailo-8: Delivers high-performance AI inference within a power budget of a few watts.

- Syntiant NDP: Ultra-low-power voice recognition processors.

5.3 Federated Learning and Decentralized AI

Federated learning trains AI models locally across multiple devices and shares only model updates, rather than raw data, with a coordinating server. This approach enhances privacy and reduces the energy cost of data movement, which suits low-power edge deployments, although on-device training adds its own compute cost.
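The article does not name a specific aggregation rule; one widely used choice is federated averaging (FedAvg), in which the server combines client models as a mean weighted by each client's local sample count. The sketch below shows only that aggregation step, with model weights represented as flat lists of floats for simplicity. The function name is hypothetical.

```python
from typing import List, Sequence


def federated_average(client_weights: Sequence[Sequence[float]],
                      client_sizes: Sequence[int]) -> List[float]:
    """Weighted mean of client weight vectors, weighted by local sample count."""
    if not client_weights or len(client_weights) != len(client_sizes):
        raise ValueError("need one sample count per client")
    total = sum(client_sizes)
    if total <= 0:
        raise ValueError("total sample count must be positive")
    dim = len(client_weights[0])
    if any(len(w) != dim for w in client_weights):
        raise ValueError("all clients must share the same model shape")
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(dim)
    ]
```

For example, two clients with weights `[1.0, 2.0]` and `[3.0, 4.0]` and sample counts 1 and 3 yield a global model of `[2.5, 3.5]`. Only these small vectors cross the network, not the underlying training data.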

5.4 Energy Harvesting and Battery-Free Devices

Innovations in power sourcing are enabling devices that operate without traditional batteries. Techniques include:

- Solar Harvesting: Common in environmental monitoring.

- RF Energy Scavenging: Captures ambient radio waves.

- Piezoelectric Vibration Harvesting: Converts mechanical energy into electricity.
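Whether a harvesting source can sustain a device comes down to an energy balance: average consumption must not exceed average harvested power. The sketch below computes the largest duty cycle that satisfies this, assuming a storage element (such as a capacitor) smooths out short-term variation. The function name and all numbers are hypothetical.

```python
def max_sustainable_duty_cycle(harvest_mw: float,
                               active_mw: float,
                               sleep_mw: float) -> float:
    """Largest active fraction d with d*active + (1-d)*sleep <= harvest."""
    if active_mw <= sleep_mw:
        raise ValueError("active power must exceed sleep power")
    if harvest_mw <= sleep_mw:
        return 0.0  # harvest cannot even cover sleep draw
    return min(1.0, (harvest_mw - sleep_mw) / (active_mw - sleep_mw))
```

With an assumed 10 mW average harvest, 100 mW active draw, and 1 mW sleep draw, the device could be active about 9% of the time without depleting its storage.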

5.5 Software-Level Innovations

Lightweight AI models and smarter operating systems are enabling better power control:

- TinyML: Brings machine learning to microcontrollers.

- Event-Driven Architectures: Reduce power use by operating only during data events.
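The event-driven pattern can be illustrated with a simple gating loop that runs the (expensive) model only when a sensor reading changes by at least a threshold. This is a sketch of the gating logic only: on real hardware the wake-up would typically come from an interrupt or comparator rather than a polling loop, and the function name is hypothetical.

```python
from typing import Callable, Iterable, List, Optional, TypeVar

T = TypeVar("T")


def run_event_driven(samples: Iterable[float],
                     threshold: float,
                     infer: Callable[[float], T]) -> List[T]:
    """Call `infer` only when a sample differs enough from the last one processed."""
    results: List[T] = []
    last_processed: Optional[float] = None
    for value in samples:
        if last_processed is None or abs(value - last_processed) >= threshold:
            results.append(infer(value))  # the costly step runs only on events
            last_processed = value
    return results
```

For the readings `[20.0, 20.1, 20.2, 22.5, 22.6, 19.0]` with a threshold of 1.0, the model runs three times (on 20.0, 22.5, and 19.0) instead of six.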

6. Future Outlook

6.1 Toward Sustainable AI Infrastructures

The industry is shifting toward energy-aware AI systems. Future models will prioritize energy efficiency alongside accuracy and speed. Energy metrics may become key performance indicators.

6.2 Policy and Regulation

Governments and industry bodies are implementing energy efficiency standards to curb digital infrastructure’s carbon impact. Compliance will drive innovation in power-efficient AI design.

6.3 Collaboration and Ecosystem Evolution

The future of power management lies in ecosystem-level collaboration. Semiconductor firms, AI researchers, hardware manufacturers, and cloud providers must work together to build interoperable, sustainable AI platforms, with power management at the edge as a core design concern.

Conclusion

Power management is no longer a secondary consideration; it is central to the future of AI at the edge. As real-time intelligence becomes essential in everything from smart homes to industrial systems, energy efficiency will define success.