Analog Devices’ AutoML for Embedded, co-developed with Antmicro and integrated into ADI CodeFusion Studio, aims to make machine learning easier for embedded engineers.

Analog Devices has made AutoML for Embedded, an open-source Visual Studio Code plugin for speeding up edge AI development, generally available. Co-developed with Antmicro and integrated into ADI CodeFusion Studio, the tool is designed to make machine learning easier for embedded engineers, particularly those working with resource-constrained microcontrollers.

Fitting complex machine learning models onto hardware with stringent memory and processing limitations is the biggest obstacle to creating intelligent edge devices.

Deep ML Expertise With ADI’s CodeFusion Studio

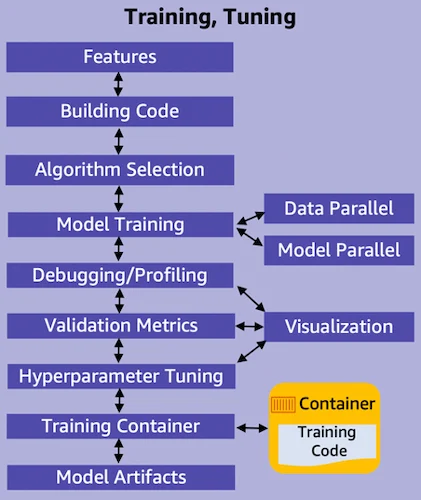

Deep ML expertise is frequently needed for tasks like deployment-specific optimization and architecture tuning. By automating the entire machine learning pipeline and staying open-source and hardware-agnostic, ADI’s new plugin aims to dismantle these obstacles.

AutoML for Embedded targets deployment on edge AI microcontrollers and integrates with ADI’s CodeFusion Studio; it initially supports ADI’s MAX78002 and MAX32690.

The tool uses the Kenning framework to abstract hardware details. Because it works with both Zephyr RTOS environments and Renode-based simulation, developers can prototype and evaluate models in real time.

At its core, the plugin combines Hyperband with successive halving and Sequential Model-based Algorithm Configuration (SMAC) to automate model search and optimization.
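Successive halving is the budget-allocation step at the heart of Hyperband: train many candidates briefly, keep the best fraction, and give the survivors a larger budget. The sketch below shows a single successive-halving bracket in plain Python. It is not the plugin's code; `toy_evaluate`, the learning-rate range, and `eta=3` are hypothetical stand-ins for real training runs. Hyperband would run several such brackets with different trade-offs between the number of candidates and the starting budget, and SMAC would replace the random sampling of `configs` with proposals from a surrogate model. Neither of those parts is shown.

```python
import math
import random
from typing import Callable, Dict, List, Tuple

Config = Dict[str, float]


def successive_halving(
    configs: List[Config],
    evaluate: Callable[[Config, int], float],
    min_budget: int = 1,
    eta: int = 3,
) -> Tuple[Config, float]:
    """Train all survivors on the current budget, keep the best 1/eta,
    and multiply the budget by eta until one configuration remains.

    `evaluate(config, budget)` returns a validation loss (lower is better).
    """
    if not configs:
        raise ValueError("need at least one configuration")
    survivors, budget = list(configs), min_budget
    while True:
        # Sort by loss only, so the config dicts are never compared directly.
        scored = sorted(((evaluate(c, budget), c) for c in survivors), key=lambda t: t[0])
        if len(scored) == 1:
            loss, best = scored[0]
            return best, loss
        keep = max(1, len(scored) // eta)
        survivors = [c for _, c in scored[:keep]]
        budget *= eta


def toy_evaluate(config: Config, budget: int) -> float:
    """Hypothetical stand-in for a training run: loss is lowest near lr = 1e-2
    and shrinks as the budget (e.g. epochs) grows."""
    return abs(math.log10(config["lr"]) + 2) + 1.0 / budget


rng = random.Random(0)
configs = [{"lr": 10 ** rng.uniform(-5, -1)} for _ in range(27)]
best, loss = successive_halving(configs, toy_evaluate)
print(f"best lr = {best['lr']:.2e}, loss = {loss:.3f}")
```

With 27 candidates and `eta=3`, the bracket evaluates 27 configurations at budget 1, then 9 at budget 3, 3 at budget 9, and the final one at budget 27. Most of the compute therefore goes to the few promising candidates rather than being spread evenly.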

Model Validation Under Stringent Memory Limits

This hybrid approach lets AutoML explore a wide range of architectures and hyperparameters while allocating training resources efficiently to the most promising candidates.

Model validation is part of the pipeline and ensures that the final model meets the stringent memory requirements of the target edge device.
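A memory check of this kind can be as simple as comparing a candidate's stored weights against non-volatile storage and its peak activation memory against RAM, with some headroom left for the application. The sketch below is a hypothetical illustration: `DeviceBudget`, `Candidate`, the 90% margin, and all byte counts are assumptions, not the plugin's data model or the specifications of any ADI part.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class DeviceBudget:
    flash_bytes: int  # non-volatile storage available for weights
    ram_bytes: int    # working memory available for activations and buffers


@dataclass(frozen=True)
class Candidate:
    name: str
    weight_bytes: int           # size of the stored parameters
    peak_activation_bytes: int  # largest activation footprint during inference


def fits(candidate: Candidate, budget: DeviceBudget, margin: float = 0.9) -> bool:
    """Accept a candidate only if it uses at most `margin` of each memory budget."""
    return (
        candidate.weight_bytes <= margin * budget.flash_bytes
        and candidate.peak_activation_bytes <= margin * budget.ram_bytes
    )


def validate(candidates: List[Candidate], budget: DeviceBudget) -> List[Candidate]:
    """Keep only the candidates that fit the target device."""
    return [c for c in candidates if fits(c, budget)]


# Illustrative numbers only.
budget = DeviceBudget(flash_bytes=512 * 1024, ram_bytes=128 * 1024)
candidates = [
    Candidate("small_cnn", weight_bytes=120_000, peak_activation_bytes=40_000),
    Candidate("wide_cnn", weight_bytes=600_000, peak_activation_bytes=90_000),
    Candidate("deep_cnn", weight_bytes=300_000, peak_activation_bytes=125_000),
]
accepted = validate(candidates, budget)
print([c.name for c in accepted])  # ['small_cnn']
```

Here `deep_cnn` would technically fit in the 128 KiB of RAM, but it is rejected because it leaves less than the assumed 10% headroom.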

In addition to optimization, the solution provides performance metrics and benchmarking through Kenning’s reporting tools. These include measurements of accuracy, memory footprint, and inference time that help designers choose the best model for specific deployment constraints.

Hardware limitations and a large parameter search space make manually fine-tuning machine learning models for embedded systems a time-consuming and technically challenging process.

Because embedded platforms often have limited compute, non-volatile storage, and RAM, even moderately complex models designed for desktop or cloud environments must be drastically restructured or pruned before they can be deployed.
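Pruning is commonly done by magnitude: the weights closest to zero contribute least to the output and are removed first. The sketch below is a generic unstructured magnitude-pruning example on a plain Python list. `magnitude_prune` and the sample layer are hypothetical, and the sketch does not describe how AutoML for Embedded or Kenning compress models. Zeroed weights only save memory if the model is stored in a sparse format, or if pruning removes whole channels or filters (structured pruning).

```python
from typing import List


def magnitude_prune(weights: List[float], sparsity: float) -> List[float]:
    """Return a copy of `weights` with the smallest-magnitude fraction set to zero."""
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


layer = [0.8, -0.05, 0.3, 0.01, -0.6, 0.2, -0.02, 0.4]
pruned = magnitude_prune(layer, sparsity=0.5)
print(pruned)  # [0.8, 0.0, 0.3, 0.0, -0.6, 0.0, 0.0, 0.4]
```

In practice the pruned model is usually fine-tuned afterwards to recover accuracy, which adds another loop to the experimentation described below.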

On top of that, tuning hyperparameters such as learning rate, batch size, optimizer choice, and regularization strength requires extensive experimentation and domain knowledge.
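To show why this takes so much experimentation, consider a small, hypothetical grid over the four hyperparameters named above. The candidate values and the 10-minute training time per run are illustrative assumptions, not figures from ADI or Antmicro.

```python
import itertools

# Hypothetical search space; the values are illustrative, not the plugin's defaults.
search_space = {
    "learning_rate": [1e-4, 3e-4, 1e-3, 3e-3, 1e-2],
    "batch_size": [16, 32, 64, 128],
    "optimizer": ["sgd", "adam", "rmsprop"],
    "weight_decay": [0.0, 1e-5, 1e-4, 1e-3],
}

# Every combination of values: 5 * 4 * 3 * 4 configurations.
grid = [dict(zip(search_space, values)) for values in itertools.product(*search_space.values())]

minutes_per_run = 10  # assumed cost of one training-plus-validation run
hours = len(grid) * minutes_per_run / 60
print(f"{len(grid)} runs, about {hours:.0f} hours")  # 240 runs, about 40 hours
```

Even this modest grid needs 240 full training runs before any architecture choices are added, which is why budget-aware strategies such as successive halving stop unpromising runs early instead of training every combination to completion.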

Every combination must be trained and validated, which often means long cycles of trial and error. For embedded deployments, tuning must also balance power consumption, inference latency, and memory footprint, goals that often pull in opposite directions.
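Because these objectives conflict, there is rarely a single best model. A common way to compare candidates on metrics like those in the benchmarking reports described above is the Pareto front: the set of models that no other model beats on every objective at once. The sketch below assumes three hypothetical objectives (accuracy, latency, RAM) and made-up results. It illustrates the general idea and does not describe a feature of Kenning or the plugin.

```python
from typing import List, NamedTuple


class Result(NamedTuple):
    name: str
    accuracy: float    # higher is better
    latency_ms: float  # lower is better
    ram_kb: float      # lower is better


def dominates(a: Result, b: Result) -> bool:
    """True if `a` is no worse than `b` on every objective and strictly better on one."""
    no_worse = a.accuracy >= b.accuracy and a.latency_ms <= b.latency_ms and a.ram_kb <= b.ram_kb
    better = a.accuracy > b.accuracy or a.latency_ms < b.latency_ms or a.ram_kb < b.ram_kb
    return no_worse and better


def pareto_front(results: List[Result]) -> List[Result]:
    """Keep results that no other result dominates (a result never dominates itself)."""
    return [r for r in results if not any(dominates(o, r) for o in results)]


# Hypothetical benchmark results.
results = [
    Result("large", 0.92, 12.0, 96),
    Result("medium", 0.90, 6.0, 64),
    Result("medium_alt", 0.89, 8.0, 80),  # worse than "medium" on every objective
    Result("small", 0.85, 3.0, 40),
    Result("small_alt", 0.84, 5.0, 48),   # worse than "small" on every objective
]
front = pareto_front(results)
print([r.name for r in front])  # ['large', 'medium', 'small']
```

A designer then picks from the front according to the deployment constraint that matters most, for example the most accurate model that stays under a latency budget.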

Limited Tools and Debugging Assistance

Furthermore, most embedded platforms lack the extensive tooling and debugging support available in server-class environments.

Developers frequently switch between simulation and testing on real hardware, which introduces delays and lengthens turnaround times. Managing platform-specific runtime environments and toolchains adds another layer of complexity.

Model compression and quantization, which are essential for practical embedded deployment, add further tuning work.

These modifications must be balanced carefully to preserve accuracy while shrinking the model. However, the best compression approach varies with the hardware target, architecture, and dataset, which makes automation difficult without specialized knowledge.
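As an example of the trade-off, the sketch below applies generic post-training affine (asymmetric) int8 quantization to one tensor. Each value is stored in 1 byte instead of the 4 bytes of float32, at the cost of a rounding error of up to half a quantization step. This is a textbook per-tensor scheme chosen for illustration. The function names and sample weights are hypothetical, and it is not the quantization scheme used by the plugin or by any ADI toolchain.

```python
from typing import List, Tuple


def quantize_int8(values: List[float]) -> Tuple[List[int], float, int]:
    """Per-tensor affine quantization to int8; returns (codes, scale, zero_point)."""
    # Extend the range to include 0 so that zero is represented exactly.
    lo, hi = min(min(values), 0.0), max(max(values), 0.0)
    scale = (hi - lo) / 255 or 1.0  # fall back to 1.0 for an all-zero tensor
    zero_point = round(-128 - lo / scale)
    codes = [max(-128, min(127, round(v / scale) + zero_point)) for v in values]
    return codes, scale, zero_point


def dequantize(codes: List[int], scale: float, zero_point: int) -> List[float]:
    """Map int8 codes back to approximate float values."""
    return [(q - zero_point) * scale for q in codes]


weights = [-0.9, -0.1, 0.0, 0.25, 0.7, 1.2]
codes, scale, zero_point = quantize_int8(weights)
restored = dequantize(codes, scale, zero_point)
max_err = max(abs(w - r) for w, r in zip(weights, restored))
print(codes, f"scale={scale:.5f}", f"max error={max_err:.5f}")
```

A wider value range means a larger `scale` and therefore a larger rounding error. This is one reason the best compression settings depend on the model and dataset, and why per-channel scales or quantization-aware training are sometimes needed to hold accuracy.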

Toolset with AutoML for Embedded

With AutoML for Embedded, ADI and Antmicro provide a toolset that bridges machine learning experimentation and the realities of deployment. AutoML for Embedded is now available on GitHub and the Visual Studio Code Marketplace.