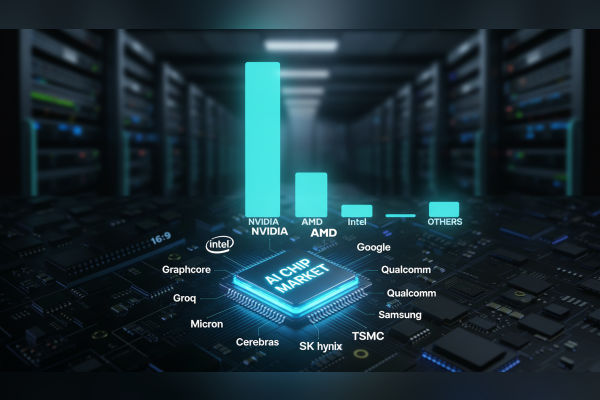

The AI chip boom, projected to drive the market from $203 billion in 2025 to $565 billion by 2032, creates unprecedented challenges in supply chains, energy demand, and rapid obsolescence. NVIDIA dominates with its GPUs powering most AI training, but competitors like AMD, Intel, and startups strain against bottlenecks that threaten the entire ecosystem. As data centers multiply for generative AI, these issues risk derailing innovation and inflating costs globally.
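To put the projection in annual terms, the two endpoint figures above can be turned into an implied compound annual growth rate (CAGR). The sketch below is only illustrative arithmetic on the quoted numbers; the function name `implied_cagr` is a hypothetical helper, and it assumes smooth year-over-year compounding across the seven years from 2025 to 2032.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    return (end_value / start_value) ** (1 / years) - 1


# Endpoints quoted in the text: $203B in 2025 and $565B in 2032.
# Assumption: growth compounds evenly over the 7 intervening years.
rate = implied_cagr(203.0, 565.0, 2032 - 2025)
print(f"Implied CAGR: {rate:.1%}")
```

Under that assumption, the projection works out to a little under 16% growth per year.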

Supply Chain Bottlenecks Strangle Production

AI accelerators devour high-bandwidth memory (HBM), GPUs, and SSDs, leading to 6-12 month lead times from suppliers like SK Hynix, Samsung, and Micron operating at full capacity.

TSMC, holding 70% foundry market share, faces massive shortfalls in 5nm, 3nm, and 2nm nodes, prioritizing long-term contracts from NVIDIA while turning away others. Samsung trails with just 7% share, struggling to secure advanced orders despite heavy investments.

Second-tier suppliers for substrates and photomasks exacerbate delays, as AI workloads grow 25-35% yearly through 2027.

Tariffs and geopolitical tensions further widen gaps, forcing smaller AI firms to compete with giants like Google and Microsoft for dwindling supplies. This memory chip crisis spills over, hiking prices for smartphones and PCs as manufacturers divert focus to AI priorities.

| Challenge | Impact | Key Players Affected |
| --- | --- | --- |
| HBM Shortages | 6-12+ month lead times | SK Hynix, Samsung, Micron |
| Advanced Node Capacity | Insufficient 3nm/2nm output | TSMC (70% share), Samsung (7%) |
| Packaging Constraints | CoWoS-style delays | TSMC customers like NVIDIA |

Power Consumption Explodes Beyond Grid Capacity

Amid the AI chip boom, NVIDIA’s Blackwell B200 draws up to 1,200W per unit, with GB200 systems hitting 2,700W, a 300% jump in one generation.

Data centers could consume 500 TWh by 2027, rivaling national grids and leaving chips idle in warehouses because of shortages of “warm shell” space, as many facilities still lack power hookups. Tech firms stockpile hardware but face 5-year delays for electricity infrastructure.

AMD’s MI300X needs 750W, Intel’s Falcon Shores 1,500W, pushing operators toward nuclear, renewables, and GaN/SiC for efficiency.
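The per-unit wattages quoted in this section can be converted into yearly energy with simple arithmetic. The sketch below is a rough illustration, not a measurement: `annual_energy_mwh` is a hypothetical helper, and its `utilization` and `pue` (facility overhead) parameters are assumptions that default to continuous full load with no cooling overhead.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years

# Per-unit power draw in watts, as quoted in the text.
QUOTED_POWER_W = {
    "AMD MI300X": 750,
    "NVIDIA B200": 1_200,
    "Intel Falcon Shores": 1_500,
    "NVIDIA GB200 system": 2_700,
}


def annual_energy_mwh(power_w: float, utilization: float = 1.0, pue: float = 1.0) -> float:
    """Yearly energy in MWh for one unit.

    utilization and pue are illustrative assumptions, not article figures.
    """
    watt_hours = power_w * utilization * pue * HOURS_PER_YEAR
    return watt_hours / 1_000_000  # Wh -> MWh


for name, watts in QUOTED_POWER_W.items():
    print(f"{name}: {annual_energy_mwh(watts):.2f} MWh/year")
```

Real deployments run below full load and add cooling overhead, so passing a lower `utilization` or a `pue` above 1.0 gives a more realistic estimate.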

On-device AI emerges as a fix, slashing energy 100-1,000x by avoiding cloud data transfers, but cloud dominance persists. Grid constraints invert supply chains: chips ready, but undeliverable without energy.

Rapid Obsolescence Risks $400B Investments

Compounding the problem, new AI chips launch every 18-24 months, so prior generations depreciate before they can be deployed amid power delays.

NVIDIA’s relentless pace leaves older GPUs viable only for inference, not training, stranding billions in inventory. Firms over-order to beat lead times, only to face irrelevance as Qualcomm pushes edge AI and Cerebras/Graphcore target specialized workloads.

This creates dual risks: under-supply means missing the AI boom, while over-supply leaves obsolete stock amid unpredictable adoption. Bain warns of looming shortages absent diversified supply chains.

Key Companies in the High-Stakes Race

NVIDIA leads with 80-90% training market share via H100/B200 GPUs, but faces power scrutiny. AMD challenges with MI300X accelerators, securing HBM deals. Intel pushes Gaudi 3 accelerators and Falcon Shores despite foundry lags.

| Company | Key Products | Strengths/Challenges |
| --- | --- | --- |
| NVIDIA | H100, B200 GPUs | Market leader; power hog |
| AMD | MI300X | Cost-competitive; HBM ramp-up |
| Intel | Gaudi 3, Falcon Shores | AI accelerator focus; process delays |
| Google | TPUs | Cloud-optimized; custom silicon |
| Qualcomm | Cloud AI 100 | Edge AI strength |
| Startups (Cerebras, Groq, Graphcore) | Wafer-scale chips, IPUs | Niche innovation; scaling hurdles |

Samsung and SK Hynix battle for HBM dominance, while Huawei eyes restricted markets. TSMC remains the linchpin, but expansion costs $20-75B per fab with decade-long ROIs.

Talent and Cost Barriers Slow Innovation

Skilled AI-hardware engineers remain scarce, with firms citing talent gaps alongside regulations. Design/manufacturing costs soar, demanding $400B investments vulnerable to flops. Governments push incentives, but timelines lag AI’s pace.

Edge devices and custom ASICs from Qualcomm/Apple promise relief via efficiency, yet cloud-scale training sustains the core pressures. As the AI frenzy reshapes global supply chains, only resilient players will thrive amid these interlocking crises.